Bash Script To Get AWS EC2 Tag Value For A Running Instance

Here is a Bash script for getting a tag value from within a running EC2 Instance.

For more information on AWS EC2, please see: https://aws.amazon.com/ec2/

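If you’re using one of the standard AWS EC2 images, such as Ubuntu, then you’ll likely have most of what you need already installed. The script relies on two command-line tools that help you interact with the AWS fabric: ec2metadata and the AWS CLI (aws). If either one is missing on your image, install it first. The instance also needs credentials that are allowed to call ec2:DescribeTags, for example through an attached IAM role.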
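Whether those tools are actually present depends on the image, so a quick check can save some confusion later. The sketch below is only an illustration: the helper name require_cmd is made up for this example, while ec2metadata and aws are the two commands the script in this post calls.

```shell
#!/bin/bash
# Hypothetical helper (not part of the original script):
# succeeds if the named command is on PATH, otherwise prints a message and fails.
require_cmd() {
    if command -v "$1" >/dev/null 2>&1; then
        return 0
    fi
    echo "Missing required command: $1" >&2
    return 1
}

# Check the two tools the tag lookup relies on.
for cmd in ec2metadata aws; do
    require_cmd "$cmd" || echo "Install $cmd before continuing." >&2
done
```

If both commands are found, the check prints nothing.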

Create a bash file with your favourite text editor.

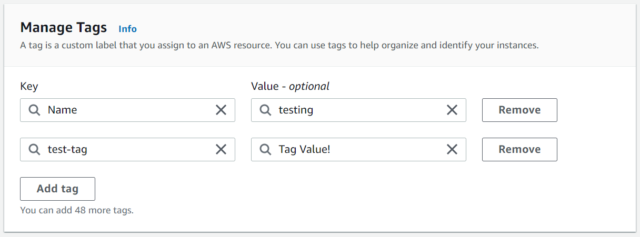

vi get-tag.sh

Paste the following script into the bash file. You’ll need to change tag-name in the script below to the tag Key name you’ve defined for the EC2 instance.

#!/bin/bash

TAG_NAME=tag-name

INSTANCE_ID=$(ec2metadata --instance-id)

REGION=$(ec2metadata --availability-zone | sed 's/.$//')

TAG_VALUE=$(aws ec2 describe-tags --filters "Name=resource-id,Values=$INSTANCE_ID" "Name=key,Values=$TAG_NAME" --region="$REGION" --output=text | cut -f5)

The value of the tag is now available to use in the variable $TAG_VALUE. Add an echo to the end of your script for now to see it in action.

echo "$TAG_VALUE"

Make the file executable and run it to see the output.

chmod +x get-tag.sh

./get-tag.sh

Tag Value!
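To see what the two text-processing steps in the script are doing, the sketch below runs them on sample data instead of a live instance. The availability zone, instance ID, and tag value are made-up placeholders, and the sample line assumes the tab-separated layout that describe-tags prints with --output=text (TAGS, key, resource ID, resource type, value).

```shell
#!/bin/bash
# Made-up sample values for illustration only; no AWS calls are made here.
SAMPLE_AZ="eu-west-1a"
SAMPLE_LINE=$(printf 'TAGS\ttag-name\ti-0123456789abcdef0\tinstance\tTag Value!')

# Dropping the last character of the availability zone leaves the region.
REGION=$(echo "$SAMPLE_AZ" | sed 's/.$//')

# cut splits on tabs by default, so field 5 is the tag value.
TAG_VALUE=$(echo "$SAMPLE_LINE" | cut -f5)

echo "$REGION"
echo "$TAG_VALUE"
```

Two caveats follow from this. The sed step assumes the availability zone name is the region name plus a single trailing letter. And if the tag is not set on the instance, describe-tags returns no rows and TAG_VALUE ends up empty, so a script that depends on the value may want to check for that before continuing.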